A/B Testing Limitations for Businesses

A/B testing is treated as the gold standard for decision-making. But for most businesses, it's inefficient, unreliable, and misleading. Here's when it actually works.

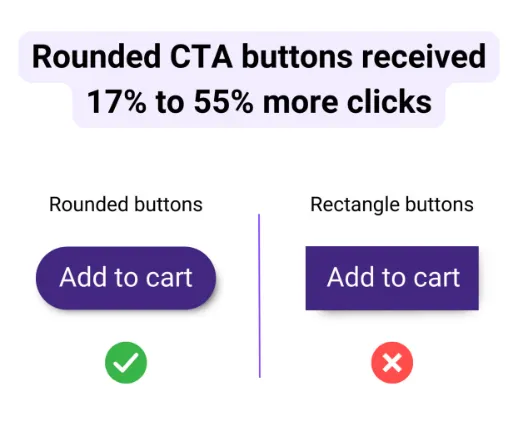

A study claims that curved CTA buttons received 17-55% more clicks than sharp-angled buttons. Does that mean you should change your buttons? Probably not, and the reason why shows what is wrong with how most businesses use A/B testing.

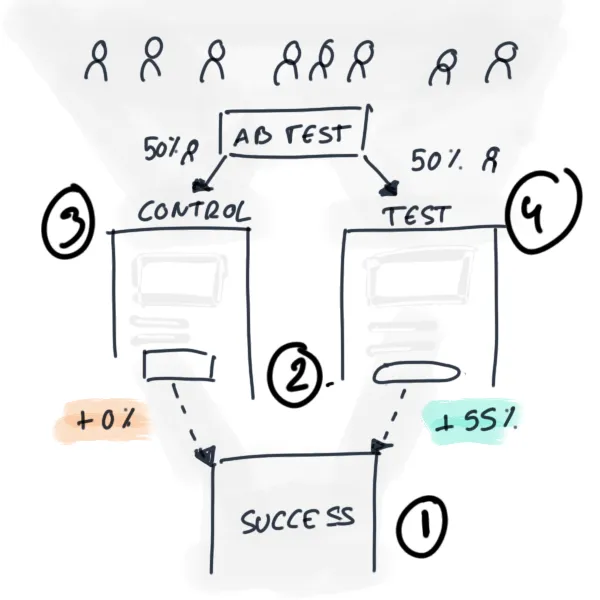

What is A/B Testing?

A/B testing involves comparing a control version against a modified test version to measure impact on a specific goal. It has four components: a goal, a change, a control version, and a test version.

Why Tech Companies Embrace A/B Testing

Tech giants like Google, Meta, and Microsoft run thousands of A/B tests every year, for four reasons:

- Unbiased user behavior — Tests capture authentic user actions without laboratory bias

- Scientific rigor — Mirrors the scientific method used across disciplines

- Data accessibility — Digital environments enable rapid data collection from large populations

- Stakeholder consensus — Science-backed decisions gain easy approval

Critical Limitations

Limited Context

A/B tests measure narrow, specific scenarios and cannot predict downstream effects on the user journey.

Disconnect from Business Impact

Increased clicks don't necessarily translate to revenue growth or customer lifetime value. Incremental improvements accumulated across multiple tests rarely compound into meaningful business growth.

Time Consumption

For businesses with fewer than 100,000 monthly visitors, an A/B test can take months to reach statistical significance, so the results may be obsolete before they are implemented.
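
To see why low traffic stretches a test over months, here is a rough sketch of the standard sample-size approximation for comparing two conversion rates. The 2% baseline conversion rate, the 5% relative lift, the 5% significance level, and the 80% power are illustrative assumptions rather than figures from any study mentioned here, and the function names are hypothetical.

```python
from math import ceil
from statistics import NormalDist


def visitors_per_variant(baseline_rate, relative_lift, alpha=0.05, power=0.8):
    """Approximate visitors needed per variant for a two-sided two-proportion z-test."""
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # critical value for the significance level
    z_power = NormalDist().inv_cdf(power)          # critical value for the desired power
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return ceil((z_alpha + z_power) ** 2 * variance / (p2 - p1) ** 2)


def months_to_finish(monthly_visitors, baseline_rate, relative_lift):
    """Months needed if all traffic is split evenly between control and test."""
    needed = 2 * visitors_per_variant(baseline_rate, relative_lift)
    return needed / monthly_visitors


if __name__ == "__main__":
    # Assumed inputs: 2% baseline conversion, 5% relative lift to detect.
    for traffic in (20_000, 100_000, 1_000_000):
        print(traffic, round(months_to_finish(traffic, 0.02, 0.05), 1))
```

Under these assumed inputs the formula asks for on the order of 300,000 visitors per variant, so a site with 100,000 monthly visitors would need roughly six months, and a smaller site proportionally longer. Larger expected lifts shrink the requirement quickly, which is one reason bigger changes are easier to test than small tweaks.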

Reliability Issues

An A/A test, in which both groups see the identical version, can still report a false 3% uplift. That shows how infrastructure problems can invalidate results entirely.
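
Even with flawless infrastructure, sampling noise alone can make two identical versions look different. The simulation below is a minimal sketch of that effect, not a reconstruction of any particular A/A test: the traffic volume, the 2% conversion rate, and the function names are all assumptions chosen for illustration.

```python
import random


def simulate_aa_test(visitors_per_arm, conversion_rate, rng):
    """Relative 'uplift' of arm B over arm A when both arms are truly identical."""
    conversions_a = sum(rng.random() < conversion_rate for _ in range(visitors_per_arm))
    conversions_b = sum(rng.random() < conversion_rate for _ in range(visitors_per_arm))
    if conversions_a == 0:
        return 0.0  # avoid dividing by zero in very small samples
    return (conversions_b - conversions_a) / conversions_a


def share_of_large_uplifts(runs, visitors_per_arm, conversion_rate, threshold, seed=0):
    """Fraction of simulated A/A tests whose absolute uplift reaches the threshold."""
    rng = random.Random(seed)  # seeded so the simulation is repeatable
    uplifts = [simulate_aa_test(visitors_per_arm, conversion_rate, rng) for _ in range(runs)]
    return sum(abs(u) >= threshold for u in uplifts) / runs


if __name__ == "__main__":
    # Assumed setup: 10,000 visitors per arm, 2% conversion, 3% uplift threshold.
    print(share_of_large_uplifts(200, 10_000, 0.02, 0.03))
```

In a setup like this, a sizeable share of runs typically shows an apparent uplift of 3% or more even though nothing changed. An A/A test that stays within the range such a simulation predicts points to ordinary noise; one that falls well outside it points to a tracking or assignment problem.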

Low Success Rates

"Only 8% of experiments run had an impact on revenue. 92% were just failing." On top of that, approximately 26% of the successful results were false positives.

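The link between a low success rate and a high share of false positives follows from basic arithmetic. The sketch below assumes that each test of an ineffective change has a fixed chance `alpha` of being declared a winner and that each test of an effective change has a chance `power` of being detected. These parameters and the 10% true-effect rate in the example are illustrative assumptions, not the inputs behind the figures quoted above.

```python
def false_positive_share(true_effect_rate, alpha=0.05, power=0.8):
    """Share of declared winners that are false positives.

    true_effect_rate: fraction of tested changes that really help (assumed).
    alpha: chance an ineffective change is still declared a winner (assumed).
    power: chance an effective change is detected (assumed).
    """
    true_wins = true_effect_rate * power
    false_wins = (1 - true_effect_rate) * alpha
    return false_wins / (true_wins + false_wins)


if __name__ == "__main__":
    # Assumed scenario: 1 in 10 tested changes has a real effect.
    print(round(false_positive_share(0.10), 2))
```

With the assumed 10% true-effect rate this returns 0.36, meaning roughly a third of declared winners would be false positives. The rarer real effects are, the larger that share becomes, which is why a low hit rate makes individual wins harder to trust.
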
When A/B Testing Fails

The methodology proves counterproductive for organizations with:

- Fewer than 100,000 monthly visitors

- High user acquisition costs

- No established experimentation culture

- Urgent growth demands

Alternative Approaches

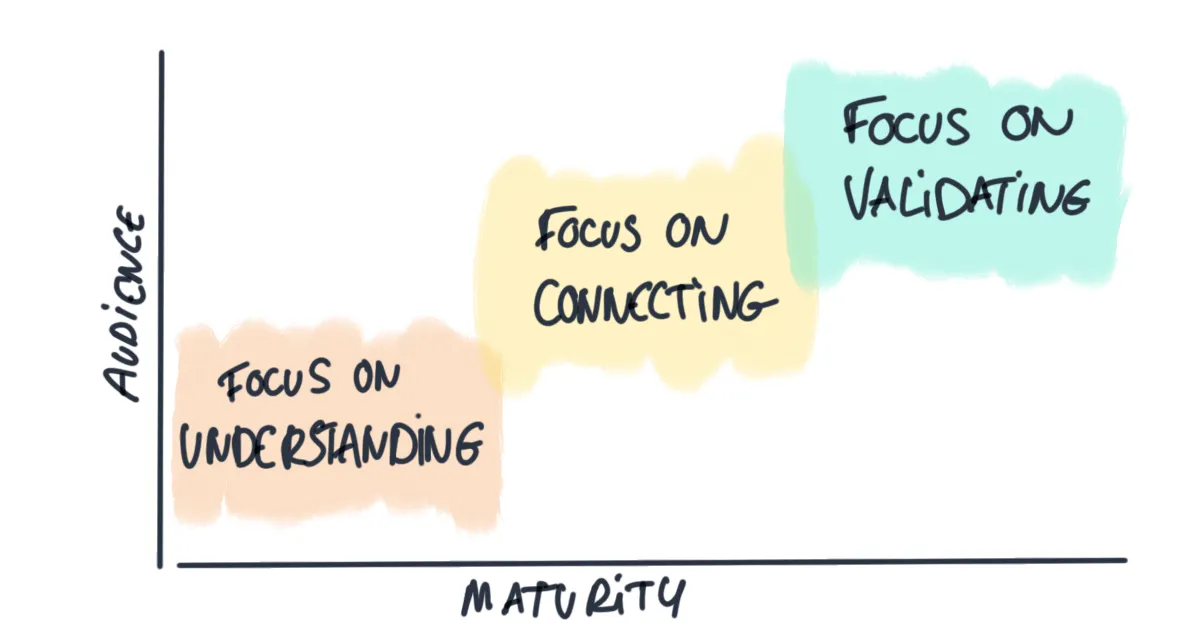

Rather than pursuing A/B testing exclusively, match experimentation to your maturity stage:

- Low maturity/audience: Conduct user interviews to understand desires and pain points

- Middle maturity/audience: Identify patterns across multiple customer conversations

- High maturity/audience: Leverage behavioral data through controlled experiments

The Shift to User-Driven Decision Making

Move from a purely data-driven approach to a user-driven one:

- Contextualize numerical results within qualitative understanding

- Ask users to explain why data shows certain outcomes

- Recognize that universal truths about design elements don't exist

Conclusion

While A/B testing offers the reassurance of quantified results, it is inefficient for most startups and small businesses. Match experimentation methods to your lifecycle stage and audience size. Prioritize larger strategic changes over incremental tweaks, and combine data insights with direct user understanding rather than relying on statistical significance alone.